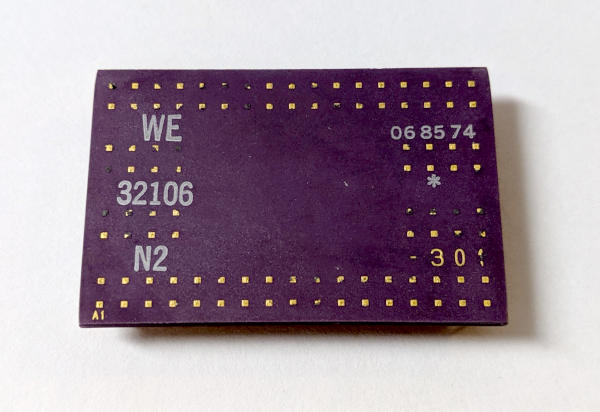

After what feels like an inexcusably long time, the WE32106 Math Acceleration Unit (MAU) has been merged into the main SIMH tree, and the 3B2/400 simulator has support for it.

This project was one of the most tedious, boring, and yet educational projects I have worked on. Join me now as I recount the harrowing details.

In The Beginning

Way back in September 2018, almost nine months ago (coincidentally, the

time it takes to make an entire human being from scratch) I posted an

article here titled “3B2 Math

Acceleration”, in which I discussed my

first few halting steps at what would later become the WE32106 Math

Acceleration Unit. It was, to put it bluntly, incredibly naive. I

discussed my attempts at using native floating point math to emulate the

MAU, and how, by luck and happenstance, the native long double type in

GCC on a 64-bit Intel x86 just happened to use IEEE-754 extended double

precision format encoding. I reasoned that I could probably get away with

just doing native math and wipe my hands of the whole situation.

This was, of course, a startlingly bad and stupid idea.

A small prototype of the code worked. But it worked for me, on Linux, on a particular version of GCC, on a particular kernel, on a particular day. There was no guarantee it would work on any other platform, or any other version of GCC, or on any other compiler for that matter.

Not only that, but the underlying algorithms used for rounding and truncation would of course have been IEEE-754 2008, if I got lucky. But the WE32106 is not IEEE-754 2008. It implements a draft version of IEEE-754 1985.

So, after a few haltingly bad attempts, it was literally back to the drawing board.

Starting Again

I won’t rehash the fundamentals of floating point. There are many, many places on the web to read about floating point representation that will do much better justice to the subject than I possibly could, and I encourage you to go read up about the subject if you’re interested.

But, suffice it to say, a portable, reproducible way of implementing IEEE-754 1985, plus the quirks of the WE32106, could only be accomplished by doing floating point emulation using integer math.

As with all things it rarely makes sense to reinvent the wheel, so I immediately began a search for a portable library that I could use. There were three problems with this approach straight off the bat:

- Any floating point math emulation library would try to implement a current version of the IEEE-754 spec, and

- A floating point emulation library would include all sorts of routines for functions and data types the WE32106 does not necessarily support, and

- Integrating a whole library into the SIMH build system is non-trivial and would surely get pushback from the upstream maintainers, with good reason.

Keeping these points in mind, I made a decision: I would find a library to use, but only take from it what I needed. This would necessitate finding one with a compatible license.

SoftFloat to the Rescue

In the end, I settled on the SoftFloat library written by John Hauser. It met all my needs, and version 2c had a license that I felt was most compatible with the requirements of SIMH. Further, it’s broken up pretty well into very understandable chunks, which would make porting it to SIMH fairly easy.

Easy, yes. But oh, oh so tedious.

I set about essentially copying and pasting just the functions that I needed, altering them to fit the SIMH coding style that I’ve adopted. I renamed a lot of variables, and changed everything to use SIMH’s standard portable data types. And I tried to do all of this without introducing stupid transcription errors.

It took ages.

I spent long nights just copying, pasting, and editing code. It was the most boring work I’ve done on the 3B2 emulator to date. But at the same time, it was very educational. I got to see the gory details of every single function. Whenever I wondered “why the hell is it doing that?” I had to take a moment to go review the basics of floating point math to try to understand it.

I could not and did not dedicate myself to this task full time. Weeks would go by without my even touching the 3B2 emulator, which made the whole thing go even slower.

But, one by one, I moved over the functions I needed.

Testing Along The Way

I didn’t want to do everything in one go and hope for the best, so I made

use of the 3B2’s DGMON diagnostics along the way to determine in what

order I would implement the functions. Each new DGMON diagnostic test

required some specific piece of functionality, so I would iterate over

these steps, in a kind of homage to Red-Green-Refactor test driven

development:

- Run the

DGMONMAU diagnostics until the first one fails. - Examine what instructions it tried to execute.

- Implement the minimum functionality necessary to make the diagnostic pass.

- GOTO 1

This ended up being a pretty reasonable way of going about it. The

first set of diagnostics test the basic registers of the MAU. The next

set exercise the MOVE functions of the MAU that convert between data

types. The next set start working on math functions, and so on.

As I went, I faithfully built up my own heavily modified working copy of SoftFloat 2c, stripped down to the minimum set of functions necessary. Each time I added a function, I commented it to say what it did and that it had been derived from SoftFloat, and ensured that John Hauser’s copyright was included in the code. I wanted to make it absolutely clear what was my own work, and what was not — not only because it was a requirement of the license, but because it’s the right thing to do.

I don’t know how many hours this took. I don’t really want to think about it. But it took a while.

The Debuggening

By the time the MAU diagnostics all passed, I felt pretty good about things. But, the diagnostics are not exhaustive. They test basic functionality, like “Does 1.0 / 0.0 raise an exception?” and “Is 1.0 + 1.0 = 2.0?”. These are not edge cases. These are just tests to make sure a MAU hasn’t suffered a catastrophic failure. So, I needed a real set of tests.

I began to ask around online looking for test programs. But, to complicate things, I had one major requirement: They had to use K&R style C, not ANSI C, because the AT&T C compiler is not an ANSI compiler. This kind of limited my options.

I eventually settled on the whetstone floating point benchmark, and a test suite called paranoia, both of which exercise a lot of IEEE-754 edge cases. Moreover, I made sure to compile them both on my 3B2/310 with a real physical MAU and on the simulator, so I could watch them run side by side and compare.

This was enlightening.

Around about 1.79769313486231570e+308

There were a lot of problems. A lot of problems.

I had to tackle them one by one, adding a ton of debugging statements to the benchmarks and test suites as I went so I could try to understand what was going wrong. I have since become an expert in this very stupid pattern:

double d = 1.01;

unsigned int *raw = (unsigned int *)&d;

printf("%e = 0x%08x%08x\n", d, raw[0], raw[1]);

That’s how you get the raw underlying bytes of a double and print them

out for debugging. Good old C!

After many judicious applications of this pattern (along with assigning

values to raw[1] and raw[2] for the inverse operation) I was able to

track down, piece by piece, where things were being calculated

incorrectly. This step, too, took ages.

In the end, what I had the most problems with was rounding. A computer, of course, does not have infinite digits of precision, so you have to cut off very long binary representations of floating point values somewhere.

All math in the WE32106 is done on extended double precision values that are treated as if they had infinite precision. When a result is returned, it is then converted either to an 80-bit, 64-bit, or 32-bit representation, with the appropriate rounding. It turns out, though, that SoftFloat implements rounding in a slightly different way than the WE32106 implements rounding, which caused many sleepless nights as I tried to get my very average brain to understand a very smart person problem.

Sticky Bits

To make a tremendously long story short, it all came down to sticky

bits. When rounding, any bits chopped off lose information. To decide

how to round, some of these bits are treated specially, and essentially

they all get OR’ed together into one bit called the sticky bit. This

bit captures only one piece of information: Did I lose any precision after

the second bit that got chopped of? Were any of those lost bits a 1, or

were they all 0?

This one single bit of information is used, when rounding, to make a choice when you have a tie. Do I increment the remaining bits? Or do I just leave them chopped off?

Well, SoftFloat 2e made a choice here that is totally valid, but not exactly the same choice that the WE32106 made. I had to track that down, understand the problem, and fix it. Eventually, after a lot of trial and error and even building a spreadsheet, I figured it out: When going from an intermediate extended double precision value to a chopped off extended double precision value, I had to remember the sticky bit, and then use it again when going from the chopped off extended double precision value to a rounded double precision value (or a rounded single precision value). That was what I was missing.

Finally, only a few days ago, I squashed the last rounding bug. I got identical results from the 3B2 simulator and the real 3B2.

I did a little dance.

In Conclusion

What did I learn from all this? A few things.

First, I learned a whole lot about floating point math, about IEEE-754, and about how amazing computers really are.

Second, when dealing with floating point, even if you do have someone else’s library, there’s no guarantee it does things exactly the same way you need it to. That doesn’t mean it’s wrong or broken, it just means it makes different decisions or follows a slightly different version of a spec than you need.

And finally, I learned a lot of patience. So much patience.

I’m glad I did this, but I’m also glad I don’t need to do it again.

Comments